How to Overcome Apple’s Face ID Lockouts

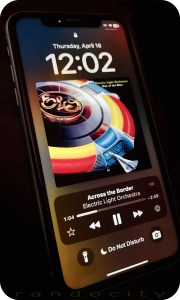

If you’re an Apple iPhone user, your phone likely utilizes Face ID biometrics to authenticate you and unlock your phone’s features. While this authentication system seems fine when it works, what happens when it fails? Not what you’d expect. Clearly, Apple didn’t think the failure design through. Let’s explore.

What is Face ID?

Face ID uses a series of hardware technologies, including an infrared dot projector, an infrared flood illuminator and a front-facing infrared camera, to scan your face and recognize you. It was touted by Apple as a better alternative to Touch ID, a fingerprint scanner, which was available on earlier iPhone models (and some current models too). Let’s just say that I prefer Touch ID over Face ID for various reasons, but I digress.

Face ID works fine under most circumstances, but there are conditions where Face ID could fail and prevent you from getting into your phone to perform critical diagnostic and/or troubleshooting features (or even backing it up onto your computer).

When you hold your phone to your face, the camera(s) scan your face for a number of key features which are then used to identify you no matter what angle or lighting (more or less) your face may be in. The reliability of this scanning technology is all dependent on the scanning hardware functioning 100% properly. We all know that hardware is prone to failure, either hard failure in the hardware itself or even soft failure by such things as poor lighting conditions, blocking the sensors or interference. Whatever the failure reason, Face ID has some important concerns and bugs that Apple needs to address.

Face ID Failure

This is the crux of the issue with Face ID, and it leads to all of the other related problems. Let’s begin with some relevant context. When you log into your favorite website or app, you’ll need credentials. Often, these consist of a username and password combination. There may be additional ways to get authenticated beyond these two pieces of data, such as a one-time SMS code, an authenticator app, a prompt to press ‘accept’ in an app on another device or even your voice, when calling into certain phone systems.

Typically, when one authentication type fails, developers offer one or more backup redundant authentication systems to help you get logged in. For example, if you’ve lost your password, sites allow you to reset your password. Resetting your password has you walk through various steps to identify that you own that account, usually by asking key questions like Name, Birth Date, Home Address or any other information that only you may know. You can often even call the support team at a website and ask them to help you get your password reset or cleared. These redundant designs prevent users from landing in dead end failures, as long as you have various other identifying data on hand to prove that you are you.
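To make the idea of redundant authentication concrete, here is a minimal sketch of a fallback chain. It is only an illustration of the design described above, not how any real site or Apple implements it; `AuthMethod`, `authenticate` and the method names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class AuthMethod:
    """One way of proving identity (all names here are hypothetical)."""
    name: str
    verify: Callable[[], bool]  # returns True when the user passes this check


def authenticate(methods: List[AuthMethod]) -> Optional[str]:
    """Try each method in order; return the name of the first that succeeds.

    Returns None only when every method fails, which is the "dead end"
    that redundant designs are meant to avoid.
    """
    for method in methods:
        if method.verify():
            return method.name
    return None


# A site with fallbacks: the password fails, but the SMS code succeeds.
with_fallbacks = [
    AuthMethod("password", lambda: False),
    AuthMethod("sms_code", lambda: True),
    AuthMethod("support_call", lambda: True),
]

# A single-method design with no fallback at all.
no_fallbacks = [AuthMethod("biometric", lambda: False)]

print(authenticate(with_fallbacks))  # sms_code
print(authenticate(no_fallbacks))    # None
```

The second list is the interesting one: with only one method in the chain, a single failure leaves the user with nowhere to go.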

Not with Face ID… :(

Apple’s Bad Face ID Implementation

Apple created Face ID so that should Face ID fail to authenticate, it leads to a true dead-end failure condition. There’s no additional way to authenticate with Face ID beyond that Face ID failure. When Face ID fails, it fails hard and it fails for good. Even though the iPhone has the ability to request alternative identifying information, such as a passcode, your Apple ID credentials, identity confirmation on other Apple devices and/or an SMS code, NONE of these other authentication systems are used or available when Face ID fails! Nope. Apple just dead ends Face ID failures into nothingness. Face ID works or it doesn’t. When it doesn’t… yeah, here we open…

Pandora’s Box (aka Stolen Device Protection)

Apple Developers, in their infinite wisdom, have chosen to lock many critical troubleshooting and corrective features behind a successful Face ID verification. One might be thinking, “Well, that seems secure enough. So, what’s the problem?” Let me tell you.

One such feature locked behind Face ID verification is Device Protection under Face ID settings. If the Device Protection feature is toggled on, there are a number of things that Device Protection controls, including the ability (or not) to toggle Device Protection off. Another feature locked by Device Protection is the ability to use the Face ID Reset Data function, which becomes restricted and unusable when Face ID fails to verify.

This leads to a circular problem. Can’t verify with Face ID. Can’t reset Face ID’s biometric verification data to attempt to fix Face ID. Because the Reset Data function remains greyed out without a successful Face ID verification, you’re essentially locked out of the feature you need most to try to FIX Face ID. Not even Apple Support or the Apple Store can help you solve this dilemma.
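The circular dependency is easier to see when written down as a toy model. The sketch below encodes only the behavior described in this article; the `Phone` class and its method names are made up for illustration and are not Apple’s code or API.

```python
from dataclasses import dataclass


@dataclass
class Phone:
    """Toy model of the behavior described in this article, not Apple's code."""
    device_protection_on: bool
    face_id_works: bool

    def _allowed(self) -> bool:
        # With Device Protection on, every guarded action needs a Face ID match.
        return (not self.device_protection_on) or self.face_id_works

    def can_reset_face_id_data(self) -> bool:
        return self._allowed()

    def can_turn_off_device_protection(self) -> bool:
        return self._allowed()

    def can_erase_locally(self) -> bool:
        return self._allowed()


stuck = Phone(device_protection_on=True, face_id_works=False)
print(stuck.can_reset_face_id_data())          # False
print(stuck.can_turn_off_device_protection())  # False
print(stuck.can_erase_locally())               # False
```

Every way out of the broken state is guarded by the very check that is broken, so nothing done on the phone itself can change either field.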

Other critical features like a local Device Reset or a local Device Wipe are also locked behind Face ID when Device Protection is enabled. Once again, critical troubleshooting and corrective steps are eliminated simply because Face ID fails to verify.

How might this impact you?

There are a number of scenarios where Face ID failing to authenticate your face may affect you:

- You cannot attempt to fix Face ID if Device Protection is enabled and Face ID fails to authenticate.

- You cannot reset the device locally because Face ID fails to authenticate.

- You cannot wipe the device to factory settings because Face ID fails to authenticate.

- You can’t use many apps that rely on Face ID to authenticate you when Face ID fails.

And no, the passcode doesn’t help you here and neither does your Apple ID password. If you receive a used iPhone from a family member (or even from a used phone seller) and you want to wipe it and set it up new for yourself, you cannot do this. If the original owner enabled Face ID, the phone will need to see the original owner’s face before the local factory reset and wipe options in Settings become available.

This is particularly problematic when the device is shipped cross country and the person whose face is enrolled in Face ID is not nearby. Solving this means shipping the device back to that person, having them perform the wipe and then having the device shipped back again. Let me just say here that SHIPPING IS EXPENSIVE! Best to avoid this back and forth shipping.

The unnecessary shipping can be avoided in used phone purchases if the seller fully wipes the device to factory defaults before shipping. Family members, however, often simply turn old phones off and forget about them, then hand them over just as they are to other family members, leading to situations like the one above.

But Wait, There’s More!!!

Apple does offer a feature that’s fairly sledge-hammery, but this feature will let you at least get the phone out of your Apple ID account, as long as you own more than one iOS or macOS device and the device exists in the “Find My” app OR you have a computer with a browser and can log into the iCloud.com website. The “Find My” app offers a critical security feature that allows you to remotely wipe your Apple devices to factory defaults, even if the device is not currently in your possession. The device will, however, need to be connected to the Internet to receive and perform the request. If the device is in your possession, there’s no problem at all. If it’s lost or stolen, it all depends on timing. If you can see the device is active and pinging in the “Find My” app, then you can wipe it.

When you buy a new iPhone, the first time you connect it to your Apple ID, this action automatically enrolls the device in the “Find My” app for tracking. You don’t need to manually add devices to this app. Apple often makes these things simple and easy for new users. This is one of those apps that “just works.”

The good thing about the “Find My” app wipe is that because it’s a remote wipe using another device on your Apple ID (usually performed because a device is lost or stolen, but can be used for other purposes), this remote wipe works around all security on the device itself, including Face ID. Meaning, no matter what security settings you have set up on the device, the remote wipe will do its thing without needing to touch the device at all.

There are some important things to consider about using “Find My” app to wipe your device, though. This wipe does as it sounds. It wipes all settings, data and information from the specific device. If you have photos or videos on the device, these will be wiped. The wipe feature erases everything back to factory defaults with the exception of ONE critical thing.

The wiped device will be placed into an Activation Lock (Cloud Locked) status. This means that in order to reactivate and use the device again, the original owner must type in their Apple ID credentials (login and password) to unlock the device for reuse. Once that’s done, the device is basically as if it’s brand new and is available to be set up again as though it were a new phone.

There are a few downsides, though. The wipe is just a wee bit sledge-hammery when all you need to do is something simple, like clearing out Face ID data. Because the “Find My” app lists ALL of your devices in a single convenient location, you will need to make absolutely sure that you have selected the correct device BEFORE sending out the wipe instruction. Don’t make a mistake here! Choosing the wrong device name means it will wipe that device instead. Make sure you name your devices properly for easy identification and double check that you’ve selected the correct device! You don’t want to wipe your or your spouse’s current phone accidentally. Caution is in order here.

However, the “Find My” wiping feature does mean that you can at least get your iPhone back into a workable state to begin setting it up again. If your phone has been backed up recently, then you won’t really lose all that much other than the time it takes to wipe and restore the phone from your most recent backup, assuming you can get the phone back or you have it in your possession. You are backing up your phone’s data regularly, right?

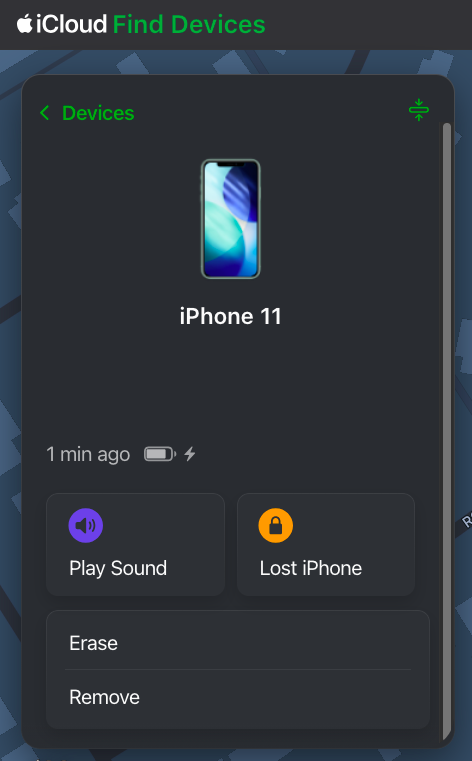

How to Send a Remote Wipe Request to an iPhone

To wipe a device remotely using “Find My”, you will need to log into the “Find My” app on a different device under the Apple ID with which that device is associated. You can do this on an iPad, iPhone, MacBook or via iCloud.com in a web browser. You don’t necessarily need to have another Apple device, but you will need access to a computer or phone with Internet access and a web browser to log into iCloud.com using the Apple ID credentials associated with the iPhone. For the purposes of this article, iCloud.com is used to show how to find and use the “Find My” feature. These options are also available in the “Find My” app on iOS devices.

Since iCloud.com is a website, it’s possible Apple may redesign this website from time to time. That means that the image shown here in this article may change. The “Find My” feature may remain available, but may be located in a different place and/or may present with a different user interface. If the user interface is different from what’s shown here, you will need to look for the “Find My” app in iCloud, open it and then determine how to get to and use the described features.

After logging into iCloud.com using the correct credentials, scroll down to the bottom of the page and you will see an array of available apps. One of the apps is “Find My”. Click it to open up the “Find My” app.

Once you have opened the “Find My” app and selected a device, you will be given a number of options for it, including Play Sound, Lost Phone, Erase and Remove, at least for an iPhone. Different devices may be given more or fewer options, depending on the device type. The “Find My” web app may update a bit more slowly than the app available on an iPhone, iPad or Mac. You may need to wait a few minutes for the “Find My” web version to refresh fully for all of your devices to show active and online. You will be unable to send any remote commands to a device until that device is shown as online.

Once you have selected the device and opened it up, it will show you a control panel like the one shown above. The Erase option is the option you will need to remotely wipe the device. Again, make sure you have selected the correct device. I’d suggest playing a tone on the device using the “Find My” app to ensure that the correct device is chosen. However, if you’re erasing a device that is not in your possession (i.e., it’s stolen), don’t play a tone. You don’t want to alert the thieves that you’re looking at the device. In a stolen device case, check to see where the device is in the world on the map. If it’s not where you expect it to be, then you’ve selected the correct device for a wipe.

If you’re trying to solve the issue presented above and you have the device in your hand (or another person has it in their hand while you talk to them), play a tone to confirm the correct device. Once confirmed, send the erase command to the phone. If another person has the device in their hand, make sure they are talking to you on a separate device from the one that is about to be wiped. Once you send the wipe command, the phone will stop functioning. They will need to be on a different device talking to you for the duration of the wipe.
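The checks described in the last few paragraphs can be summarized as a small pre-erase checklist. This is only a sketch of the reasoning in this article; `WipeRequest` and `blockers` are hypothetical names, and nothing here talks to “Find My” or any Apple service.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class WipeRequest:
    """Hypothetical record of what you have checked before pressing Erase."""
    device_name: str
    shown_online: bool                 # "Find My" shows the device as online
    in_possession: bool                # you (or someone you are talking to) hold it
    tone_heard: bool = False           # the played sound came from the expected device
    location_unexpected: bool = False  # the map shows it somewhere you did not leave it


def blockers(req: WipeRequest) -> List[str]:
    """Return reasons not to send the erase yet; an empty list means go ahead."""
    problems = []
    if not req.shown_online:
        problems.append("device is not shown as online yet")
    if req.in_possession and not req.tone_heard:
        problems.append("play a sound and confirm it comes from this device")
    if not req.in_possession and not req.location_unexpected:
        problems.append("map location does not confirm this is the missing device")
    return problems


in_hand = WipeRequest("Old iPhone", shown_online=True, in_possession=True, tone_heard=True)
print(blockers(in_hand))  # []

unchecked = WipeRequest("Old iPhone", shown_online=True, in_possession=True)
print(blockers(unchecked))  # ['play a sound and confirm it comes from this device']
```

Note that the stolen-device branch deliberately never asks for a tone, matching the advice above not to alert whoever has the phone.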

Remote Erasure — How does it work?

Once the Erase command is successfully sent to the device, the phone will immediately begin prompting for passwords in various popups. These popups indicate the command has been received by the phone. Ignore the popups and do nothing about them, though. At this point, the phone will need to be turned off and turned back on. Once the phone has been rebooted, the wipe will begin. The iPhone screen will turn black, a white Apple logo will appear and a small progress bar will appear just below the Apple logo.

The phone may reboot a couple of times during this wipe process, each with progress bars. Once the wipe process has completed, the device will go into Activation Lock (Cloud Locked) mode. When the phone is powered on after the wipe has completed, the phone may require setting up WiFi access before moving forward. However, at some point, you will be prompted to enter the Apple ID and Password of the person who originally owned the phone. This is a Cloud Lock. Entering these credentials will remove the Cloud Lock status and put the phone into a factory default setup mode to begin setting the phone up as if it were brand new. Once the Cloud Lock status has been removed, the phone is no longer associated with the Cloud Locked Apple credentials in any way.
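The sequence above behaves like a small state machine. The sketch below models only what this article describes; the state and event names are invented for illustration and do not correspond to anything in iOS.

```python
from enum import Enum, auto


class State(Enum):
    COMMAND_RECEIVED = auto()   # password popups appear
    ERASING = auto()            # Apple logo with progress bar
    ACTIVATION_LOCKED = auto()  # wiped, waiting for the owner's Apple ID
    FACTORY_SETUP = auto()      # ready to be set up as new


# Allowed transitions, keyed by (current state, event). Event names are made up.
TRANSITIONS = {
    (State.COMMAND_RECEIVED, "reboot"): State.ERASING,
    (State.ERASING, "wipe_finished"): State.ACTIVATION_LOCKED,
    (State.ACTIVATION_LOCKED, "owner_credentials_ok"): State.FACTORY_SETUP,
}


def step(state: State, event: str) -> State:
    """Return the next state, or stay put if the event does not apply."""
    return TRANSITIONS.get((state, event), state)


state = State.COMMAND_RECEIVED
for event in ["reboot", "wipe_finished", "wrong_credentials", "owner_credentials_ok"]:
    state = step(state, event)
    print(event, "->", state.name)
```

The only way out of `ACTIVATION_LOCKED` in this model is the original owner’s credentials, which is the Cloud Lock behavior described above.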

Stolen Device Protection vs Cloud Locking

Here’s just a little bit of commentary about the Stolen Device Protection feature itself. I’m not exactly sure what Face ID’s Device Protection feature is actually trying to solve, honestly. Apple has already previously developed Cloud Locking. The Cloud Lock system is an effective deterrent for theft or loss. Should someone manage to get past the passcode and into your iPhone, they can’t wipe your device because the wipe process requires logging out of iCloud using the user’s Apple ID credentials and password. The wipe will stop and fail if the correct credentials are not input during the wipe process.

Unfortunately, Apple has taken this wiping problem one step further with Face ID’s Stolen Device Protection. With Device Protection enabled, not only do you still need to enter your Apple ID credentials during portions of the wipe to take it out of iCloud, but Face ID must function to even begin the wiping process.

Again, because Face ID dead end fails, this can lead to the possibility of never being able to actually remove a device from your Apple account in the expected way, by wiping the phone on the phone. Maybe you bought a new iPhone because your Face ID system stopped recognizing you. That’s fine and all, but now you cannot remove that device from your Apple ID because Face ID prevents wiping the device from the device itself. Yeah, this is Apple not thinking things through.

That is, until or unless you realize that the “Find My” app allows for remote wiping your device(s), which does solve the above Face ID dilemma. Just be cautious when selecting a device to wipe. Don’t pick the wrong one.

Conclusion

So, yes, there you have it. There is definitely a bug in Apple’s Face ID authentication system that can prevent you from locally wiping or locally fixing your Apple device. It’s an authentication bug that should be considered an oversight by Apple’s developers. However, all is not lost. Apple has provided us with a sledgehammer approach in the “Find My” app to work around this bug, as long as you have other devices that can initiate the wipe inside of “Find My” and assuming that the “Find My” feature is working correctly on the remote device. Lots of things need to line up properly for the “Find My” device wipe to function.

If you have run into similar issues regarding Face ID failures, please sound off in the comments. If this article was helpful to you, please follow, like and leave a comment below.

Trump: America’s first illegally elected President

America is about to make a massive mistake! The U.S. Constitution has a lot of words. I know, it seems difficult to read. It’s not. Here are the constitutional problems with Donald Trump as President Elect and why the 2024 Election results are invalid. Let’s explore.

The 14th Amendment to the U.S. Constitution is a curious beast. It says a lot of things, but none more important than section 3 below:

No person shall be a Senator or Representative in Congress, or elector of President and Vice-President, or hold any office, civil or military, under the United States, or under any State, who, having previously taken an oath, as a member of Congress, or as an officer of the United States, or as a member of any State legislature, or as an executive or judicial officer of any State, to support the Constitution of the United States, shall have engaged in insurrection or rebellion against the same, or given aid or comfort to the enemies thereof. But Congress may by a vote of two-thirds of each House, remove such disability.

Highlights have been added for brevity and clarity. The 14th Amendment describes ALL of the qualifications necessary for a person to run for any elected office. Failing any of these requirements means that the candidate is INELIGIBLE to run. Note this previous statement because it becomes very important to America and the 2024 Election.

January 6th

… is widely understood as an insurrection. It fits all of the qualifications for it. It was violent. It was an uprising. The uprising was against the United States government. The uprising was intended to subvert the will of the voters. The uprising was intended to aid Mr. Trump in remaining in office past his term. The uprising was fomented and incited by Mr. Trump at the Ellipse the morning of January 6th.

More than this, Mr. Trump decided to sit idly by for 4 hours and do nothing to stop it. This is aiding and abetting… the very definition of what’s written in the 14th Amendment.

As a result, even Congress was forced to consult with the Supreme Court on whether what Mr. Trump did was considered an insurrection. The problem is, it doesn’t matter what the SCOTUS believes, it only matters what Congress believes.

If Congress was required to ask another authority in the government about Trump’s conduct, then it’s clear that Congress is unclear on Trump’s eligibility. This means that Trump is and should be considered ineligible to run for office until this question is 100% cleared by using the 14th’s prescribed VOTING mechanism. Trump is now considered a “grey area” candidate at this point.

The 14th Amendment is CRYSTAL CLEAR on what is required to ensure any “grey area” candidate is eligible. “But Congress may by a vote of two-thirds of each House, remove such disability.”

It says it right here in the 14th’s text. Congress may choose by a 2/3rds vote to lift such a disability and make any such “grey area” candidate eligible to run for office. Until such a vote, the candidate must remain ineligible.

Congress failed to vote on this! (IMPORTANT)

Keep in mind that nowhere in the 14th Amendment does it allow or authorize the SCOTUS to chime in on such and make any definitive choices. The ONLY choice given by the 14th is to have Congress VOTE on it. Any questions involving any candidate’s eligibility automatically means that a Congressional VOTE is REQUIRED.

2024 Election

What does all of the above mean for the 2024 election? Let’s break it down.

Since Donald Trump was then and is now an ineligible candidate per the 14th Amendment and because Congress failed to vote on Trump’s eligibility because of his “grey area” status, that means Trump is and remains an illegal candidate on the ballot.

Trump shouldn’t have been on the ballot because he’s ineligible due to the failure of Congress to follow the procedures outlined in the 14th Amendment; procedures that are quite crystal clear in their intent and in their required actions.

But the SCOTUS…

The SCOTUS doesn’t play into this. Nowhere in the 14th does it say that the SCOTUS can intervene and offer up their opinion. Think of it this way. The SCOTUS’s opinion is tantamount to you having committed a crime, then asking a judge if they believe you are innocent of the crime.

If a judge gives you their opinion as “innocent”, that doesn’t mean you aren’t still responsible for having committed that crime. That judge’s opinion absolutely DOES NOT let you off of the hook for having committed that crime. It’s just an opinion. What matters is what the LAW says is required.

If the law says you should be indicted and charged, that judge’s opinion absolutely 100% WILL NOT get you out of that predicament. You will still be held accountable for what you did in whatever way the law requires. Talking to a judge absolutely does not get you a “get out of jail free” card no matter what that judge says. The same for the SCOTUS. An opinion is just an opinion. It is 100% not binding. What is binding is what the written law requires.

Election 2024 continued…

Because Trump is a “grey area” candidate due to his involvement in January 6th AND because Congress had questions involving his conduct on January 6th AND because Congress did not rule on Trump’s eligibility, Trump remains ineligible to run for ANY office… period.

Until or unless Congress votes on Trump’s eligibility as the 14th Amendment requires, Trump remains ineligible to be on any ballot.

Let’s break this down further…

Because Trump was on the 2024 ballot as an ineligible candidate, the results of the election are, likewise, invalid. This means that the 2024 Election technically has no winner because the Election results must be tossed out in their entirety. Trump is not the President Elect because the 14th Amendment is clear on this matter. Trump is ineligible.

Kamala Harris is not the winner either because the entire election results must be tossed out entirely. It’s a fraudulent election because an ineligible candidate participated.

Re-Run the Election

Because the 2024 Election results are 100% invalid, the election must be rerun with 100% ELIGIBLE candidates. Trump can only be made eligible by a Congressional vote in both houses. That would need to be done BEFORE any Re-Run election. If the vote fails or if Congress is unable to hold such a vote, then Trump must remain off of the Rerun election ballot.

Accepting an Illegal President

If America moves forward with this illegal Presidential charade, then we are complicit in breaking down the will of the Constitution. The Constitution is completely clear on how candidate eligibility matters need to be handled.

If we accept Donald Trump as President Elect under these ineligible circumstances and accept the results of this invalid election, America will have voted in its first ILLEGAL President in U.S. History. It also means the Constitution is null and void. Democracy is dead if we proceed here.

Technicality

Technicalities matter and this one matters a great deal to both America and the United States Constitution. Do we stand with the Constitution and uphold it? OR, do we stand with Donald Trump and vote against our Constitution?

Because Congress made a procedural faux pas in this matter, it is on Congress to right this wrong. In fact, it’s on the entirety of the United States Government leaders to stand up for our Constitution and call the 2024 Election invalid and require a re-run election.

Let’s do the right thing here! OR, there’s no stepping back from this precipice! We are at America’s decision gate and most of us don’t even know it. There is a short amount of time to correct our course here, but it requires ALL Americans and all American Leaders to bring attention to this situation regardless of their partisanship.

Trump can still be America’s President, but it must be voted on in the Constitutionally correct way.

America has made the wrong choice

Much to the chagrin of so many, Donald Trump has managed to win the election and become President, but not by a landslide. Donald Trump will spin it that way, but it isn’t true. One thing that is absolutely true, however, is that lies are winning over truth. Lies are how Trump won. Let’s explore.

Over-Analyzed

The news media outlets are now over-analyzing Trump’s win, pulling out all manner of random talking heads to say whatever they think. These randoms, most of the time, make zero sense with their inane arguments as to why Trump won.

For example, one of MSNBC’s talking head randoms claimed that Trump won because Democrats called the Trump base bigots and racists. I don’t recall any Democrat candidates saying this. Media outlets may have been making these arguments, along with many talking heads on media outlets, but I can’t recall a single Democrat candidate saying this. Feel free to point out how I’m wrong in the comments below, however.

One thing that is absolutely certain is that media outlets have been calling Trump a bigot and racist. This is true. It seems that if these talking heads are so out of touch that they can’t recognize the difference between a news media outlet and a Democrat, they’re living in a cave. They’re also clearly not thinking.

Spinning Lies

Trump won, not because Democrats did or said anything wrong. Trump won because Trump and his cabal are able to spew lies about the Democrats that seemed truthful to the masses. That’s the gist of it. At the heart of the matter, it comes down to lies over truth.

People seemed to have forgotten one cliché, but very salient and prophetic quote:

If it seems too good to be true, it probably is.

Nowhere is this quote more applicable than towards Donald Trump. Trump is a conman, first and foremost. The word “conman” has a lot of negative connotations, but let’s break it down. The “con” prefix in “conman” is short for “confidence”.

This means that a “conman” gains your “confidence” by saying things that, you guessed it, “seem too good to be true”, leading to an initial skepticism. Then, the conman follows up those words with more words that try to allay your fears about his statement being “too good to be true.” In a very real sense, he is leading you down a primrose path made up of plastic flowers. They’re pretty and may seem real, just don’t get too close.

He then hauls out 2, 3 or more shills who all back up his claims as the conman. These shills are paid accomplices who say whatever the conman wants because they were paid off. Think of them as paid actors.

These shills who come rolling out are intended to prove to you that the conman’s words are truthful. Some people may still be skeptical at this point, unless some of the shills are people like a mayor, your police leader or even your pastor. That’s where a conman’s words get a huge boost to seem “truthful”, even though they’re still all just a pack of lies.

The Wrong Decision

It seems that the vast majority of America has now been conned by a man who has enlisted far too many confidence shills like Elon Musk and Ron DeSantis and Greg Abbott and Rupert Murdoch (and sons) and this list goes on and on and on.

Gullible sorts believe what Trump says because they believe what his shills are saying. That rationale is, “if all of these people are saying the same thing, then it must be true.” Yet, shills are still shills perpetuating lies on behalf of the conman. Unfortunately, when so many are perpetrating the same lies, it can be difficult to understand that these lies are, very much in fact, still lies.

Let’s not get bogged down into Trump’s continual lies.

4 years of Hell

America is literally in for 4 years of hell. You will begin to realize that the lies that Trump and his cabal have told are in fact truly lies. That you were, in fact, deceived by a legitimate conman. Unfortunately, you’re going to learn this fact in the most difficult ways possible. Let’s list out each of what Trump has made claims of “fixing” and then realize why he will be unable to actually do any of it. In fact, the economy will get far, far worse under Trump’s leadership than where we are right at this moment in time.

Trump’s lies:

- Trump will bring down inflation and restore what was the economy prior to Biden. This is both a lie and it is false. Trump has no ability to steer America’s economy to make it any better than it is right now. In fact, with Trump’s continual chaos, firings, distractions and age-related mental degeneration, America’s economy will actually get worse, not better. Expect higher rents, higher food costs, higher gas prices and higher inflation than where we are today. It could even get to twice as high as today.

- The Middle East and Russia wars will end. Lies. Trump has no influence to control or change the outcomes of what’s going on in the Middle East or with Russia. The best he can do is attempt to befriend Putin again and play hardball with Netanyahu, neither of which will affect those wars in any way at all. Worse, though, is that for all of his meddling in these international affairs, he will see to it that the first item above absolutely comes true… a worsening economy, more inflation and higher prices. The only way that the Ukraine war ends under Trump is for Ukraine to be handed over to Russia on a silver platter.

- Trump will deport many, many immigrants. Lies, mostly. Not in that he will deport people, but in HOW and WHO he will deport. While he may be able to round up immigrants and “deport” them, there’s no way to know what exactly he means by this. He could attempt to round up not only recent immigrants, but all immigrants of any nationality regardless of their legal citizenship status or how long they have lived in America. Then, deport ALL of them. MAGA isn’t just about “Making America Great Again”, it’s about “Making America White Again.”

- Trump will fix the border. Lies. Trump has no way to fix the border. Putting up a border wall will cost taxpayers trillions more. Instead of reducing taxes, you’re going to be paying even more to help put up that wall along the border… a wall that, incidentally, will do nothing to stem the tide of immigrants. Of course, Trump will lie about all of this and tell you it is working. Because you don’t live near the border, you will have to take his word at face value. Living near the border would tell you the real truth, but you don’t care about that because you don’t live near there.

- Trump will make the country better. Lies. Trump only cares about one person. Himself. Trump will make the country better for HIM only. Not for you, not for your family and not for anyone else you know. This ties back to the first item above, but it is markedly different because Trump wants to be President not only for the power he derives, but for the money he gains and the money he can skim from just about everywhere he can find. This, of course, costs the economy money and you, as a taxpayer, money. Trump wants to turn the country into a playground for the rich with those at the bottom becoming pawns for his pleasure, convenience and for the work he can make you do. He wants you to work even longer hours, get even less money in wages and effectively become slave labor to him. Well done voting for him.

Third World Nation

These above are the top things Trump has promised. However, he has also promised political retribution to his opponents. We’ll have to see how his political retribution ends, but that likely means completely getting rid of the Democrats entirely.

Add to that his promise of toppling the Constitution. These are additional promises he has made. The problem is, toppling the Constitution means toppling America. Toppling America means putting America into not only a great depression, but the biggest depression that America has ever seen. It also means America will cease to exist as a country and will instantly become a third world nation. The dollar will cease to have value across the globe. Think of what the peso is worth in Mexico and devalue the dollar by even more than that.

If the constitution is toppled, your rights are gone. Your home is gone. Your land is gone. Your money is gone. Everything you hold dear is absolutely, 100% completely and utterly gone. Without a constitution to protect your rights, you have no rights at all.

Trump can come in and seize everything you have and everything you own. Trump can then rearrange the state boundaries, remove voting entirely, disband the military or rearrange the United States to his will and effectively cause one of the most catastrophic changes to what was formerly known as America.

If we get to this point, and yes it is entirely possible we will in less than 4 years, Trump will have to begin selling off parts of American territory to continue to fund his own lifestyle choices, that and to avoid becoming part of a coup. That probably won’t work. Don’t bet that if we get to this point that Mexico, Russia, China and North Korea won’t eye our land for conquest. World War III? Forget that. We won’t be able to even defend our nation once Trump brings us to this point. Our military will be so disjointed and disbanded, that we won’t have a military to speak of. Nukes? They probably won’t even work.

You Voted For This!

This is exactly what you voted for. You bought into Trump’s con. You think he’ll make America better, but that’s not going to happen. He’s too old and too unstable to produce any results. Trump has no moral compass nor ethical boundaries. Trump is, as some media outlets have stated, transactional. He does things on a whim, but also does some planned things with as minimal planning as possible. When something is planned, it’s usually planned and executed in hours, with absolutely not enough time to judge the consequences.

Trump offers no real plans. He isn’t a planner. He’s not even a doer. He’s a leech and a conman. He takes and takes and takes. He almost never gives back.

This is what you wanted? No? It’s absolutely what you’re going to get.

The next 4 years are going to be a living hell for not just Democrats, but equally for all Republicans. You wanted to open Pandora’s Box. Well, now you did. Now we get to see exactly what’s inside of Pandora’s box… and no, it’s not going to be pretty.

Inside Job? Suspicious Shooting at Trump’s Butler Rally

As the investigation into what went on at Trump’s Butler, Pennsylvania rally progresses, one question that should be on everyone’s mind is, “Was this shooting an inside job?” There are too many suspect things involving this shooting, not least the 20 year old suspect.

Disclaimer: This article is intended to be speculative in its nature and in handling this sensitive topic as this situation is still unfolding. Not all information is yet available. This article is not intended to accuse or defame any individual person or entity stated. This article is only intended to ask the pertinent questions that need to be asked based on what we know so far.

Let’s explore.

What happened?

Trump, as he does on the campaign trail, was holding a political rally in Butler, Pennsylvania on July 13th. Trump was speaking at the microphone at the time. Several shots rang out; Trump’s ear was allegedly grazed by a bullet, and a bullet struck and killed one rally attendee.

Secret Service then stepped in to protect the former President by attempting to hold him down. Before that happened, he defiantly raised a fist, displaying his bloody ear.

Election and All Stops

Trump will pretty much stop at nothing to secure the Presidency. He’s already made that abundantly clear with the January 6th event and his failed attempt at halting the counting of the state electoral votes. Then, as a part of that, setting up and fomenting the violent riot that followed at the Capitol.

The question then remains, “Was the shooting at Trump’s Butler, Pennsylvania rally an inside job?” Let’s see if we can at least understand better why this is even a question.

Inside Job?

Again, this is a speculative article in its nature. It’s not intending to accuse anyone. It is intended solely to ask questions. Let’s get right to the meat of this article. There are so many suspicious activities involving this Butler, Pennsylvania Trump shooting that we need to understand them all. Let’s make a bulleted list:

- The CIA and FBI are primo at sniffing out early notifications of possible mass shootings. Yet, they missed this one? No social media ramblings at all?

- The Secret Service didn’t scope out the crowd in advance or even during the event?

- The Secret Service didn’t wand or otherwise run the crowd through metal detectors?

- The Secret Service seemingly didn’t have close building rooftops covered to prevent someone from taking pot shots at Donald Trump or the crowd? Hmm…

- The Secret Service was able to neutralize the shooter in seconds? Even more, hmm…

- Donald Trump fist pumps for the camera with a bleeding ear after a supposed gunshot. Yeah, that’s a big hmm…

- That a 20 year old is able to circumvent Secret Service’s security measures? Okay, hmmm.

- Donald Trump sends up a defiant fist while Secret Service is attempting to secure him. Uh, nokay.

- Let’s label this what this really is, another mass shooting.

Let’s break this all down. Neither the CIA nor the FBI had any advance notice of a possible shooter at this rally? That’s suspect. It’s like no one said anything prior to the rally. I find it difficult to believe that a 20 year old doesn’t have a social media presence, let alone potential discussions about what went on. Alone, this one miss isn’t a problem if other safeguards catch a would-be assailant. They didn’t.

It’s unclear if the Secret Service was able to properly vet the entire crowd through magnetometers or by wand device, but it seems that at least one person slipped through that process. The question then is, what happened here? How did this happen? Did this assailant remain far enough away from the event to not need to be vetted by Secret Service?

The building where the shooter camped was close enough to the rally site to fire a weapon reliably, but apparently not so close that he would be detected. I’m guessing that because of this distance, the Secret Service didn’t require vetting people at this distance? Really? Below is the alleged building involved where the suspected 20 year old shooter allegedly camped.

Unfortunately, there is yet another metric that supports that this may have been an inside job. It seems highly unlikely that this building wouldn’t have been fully secured (even the rooftop) in advance of the rally by the Secret Service, including either having SS staff on the rooftop or at least standing outside of this building location to prevent trespassing.

Clearly, at least according to various news media reports, the shooter was neutralized moments (seconds?) after the shots rang out by a Secret Service agent, with one of the suspect’s earlier shots allegedly grazing Trump’s ear while killing a rally participant.

Suspicious

This whole building security situation is highly suspicious. How did a shooter manage to get on top of a building that should have been fully secured by the Secret Service? That either means that Secret Service was not securing that building properly or, this is an even worse thought, that Secret Service ignored the individual as they traversed onto the rooftop with their weapons.

Yet, clearly the Secret Service was able to neutralize this rooftop target in moments after the shots rang out? Secret Service apparently had that rooftop covered as it has been reported that a Secret Service sniper was able to locate, take aim and shoot at the assailant all within a matter of seconds. All of this action by the Secret Service implies that this building was, indeed, being fully covered by Secret Service protection. Yet, a 20 year old shooter can manage to get past that security, pull out a weapon, take a position on the roof and begin firing shots… all without being detected? Yeah, this author is not buying that idea.

The question remains, how would such a shooter manage to get past Secret Service and traverse onto a rooftop of a building that was apparently so well secured? Yes, this is a really big and suspicious question.

Inside Job Part II

As we delve into all of the above, we come to realize that either the Secret Service was highly inept at performing their security responsibilities (doubtful) or that this was an inside job.

Donald Trump and all of his closest confidants, particularly the ones participating in the rally, would likely have known of the Secret Service’s plans (or at least many of them) to secure the rally site. Information that could potentially “leak”.

If the rooftop building was so well covered so as to neutralize the target in moments, it’s inconceivable that a random person could randomly and with extreme luck happen upon a Secret Service blind spot to infiltrate and make their way onto the rooftop all without being seen or, more importantly, heard by Secret Service. Again, either Secret Service was ignoring this situation or it seems likely that something else was going on here. It’s all too suspicious and this author is not buying it.

Why not a Lone Wolf Shooter?

The chance that a person could cart a weapons bag onto a rooftop that is being actively secured by Secret Service and not be seen or heard doing this is infinitesimally small. By infinitesimally, I mean the chances are next to zero. Clearly, if Secret Service were able to neutralize this shooter as rapidly as they did, the Secret Service had coverage on or near the building involved. Having that level of coverage implies that the shooter may have had help to get around the Secret Service detail.

This means that the shooter would have needed to get help to find a blind spot in Secret Service coverage, a blind spot that only an inside person would know. That means that the shooter may have been fed this information prior to traversing onto the rooftop, which allowed that person to avoid being detected by Secret Service, arm up and then take the shots.

Fist Defiance

One highly suspect issue during this whole event is Trump’s behavior immediately following the shot where he realized a bullet had allegedly struck him. Instead of going into panic mode as one might do, he decided to stand up with a fist in the air for a photo opportunity. It’s almost like Trump knew that the threat was over, somehow. That’s not a normal behavior after having been shot and nearly assassinated. Instead, he should have wanted to leave the rally as quickly as possible to secure the safety of his person. Instead, he seemed willing to defy his Secret Service detail as they attempted to pull him to safety.

Seriously, who thinks about photo opportunities when someone is shooting a weapon in your general direction?

Trump involved?

This is a question, not an accusation. We already know that Trump is not averse to taking drastic and unusual steps to make a point and in his attempt to win and/or hold the Presidency. Just look at January 6th as an example. Disclaimer: this is a question that must be asked. It is not intended to accuse.

Democrat Involvement?

Clearly, Trump’s sycophant Republicans are going to play the lone-wolf must-be-a-Democrat card, even though it has been confirmed that the now deceased 20 year old shooter was a registered Republican. It also seems like almost every time something happens involving Trump, it is inevitably Trump who had a hand in its outcome. Yet, Trump (and his sycophants) will inevitably and hypocritically blame it all on the Democrats in one breath, while calling for unity in another. Because of all of the suspicious goings on involving this shooting, the entire situation does seem highly suspicious and dubious.

Trump’s Repeated Calls for Violence

Trump has repeatedly used veiled words and rhetoric to call his “troops” into action. What troops you ask? Well, obviously troops like the Proud Boys and the Oath Keepers and other Republicans. Trump understands that his broad rhetoric will call “his” troops into action, and he is willing to use it. His veiled words tell these various groups to do things up to and including performing violence when necessary. Again, look at January 6th as a prime example. It’s not the only example (e.g., NY trial), though.

As a person with the amount of public sway that Donald Trump holds, his seemingly innocent words are sometimes turned into threatening and violent actions by his followers. Just look at the rhetoric that Trump used against various judges and various court staff and what ultimately happened to those people after Trump’s words were unleashed. His words even went so far as to force a judge to issue a gag order against Trump during his New York trial. Rudy Giuliani, one of Trump’s biggest hired sycophant lackeys at the time of Trump’s Presidency, was even sued for defamation by election workers and lost, as he had spread many lies about those workers involving the 2020 election; lies that fomented negative action towards these workers by Trump followers. Rudy Giuliani has even been disbarred as a lawyer over his 2020 election lies.

While what Rudy Giuliani said didn’t personally result in threats and violence against those people, at least not by Giuliani’s own hand, it did foment many, many uncomfortable situations for these election workers by others from within Trump’s “troop” camp; well-meaning workers who were simply hired to do a job during an election. Fomenting these kinds of unchecked threats and violence against others is clearly something Trump (and his lawyers) shouldn’t be doing. And yet, perhaps one third to one half of the nation want to reelect this man?

Fomenting violence, regardless of how veiled the rhetoric may be, is still fomenting violence. It’s no wonder that eventually Trump’s own veiled words would turn back around on him. Trump likely knew that this would be an eventuality. Based on all of the suspicious goings on above, it seems more likely that what occurred may have been an inside job, taken as a step to quell that violence; an inside job made to look like it isn’t one? We just don’t know.

Unfortunately, one rally goer died as a result.

Is Trump, the Secret Service or his inner circle involved?

There’s no way to know, yet. But, this question must be asked and answered. This situation is still unfolding and we don’t yet have all of the details. This article is written solely using logical speculation based on the details known at the time of writing and on logical deduction around these rather dubious situations so far. This article is not intended to accuse anyone. It is simply here to ask pertinent questions. After all, as infinitesimal as the chances are, a 20 year old lone-wolf shooter managed to slip through Secret Service’s grasp and gain access to an unsecured rooftop to take shots at Trump? It’s not likely he did this without help.

Is it possible it could be done? Yes. Is it probable? No.

Secret Service is way too meticulous in planning its security details involving the former President. Either the Secret Service lapsed in its responsibilities for security at this rally or this was an inside job designed to circumvent the Secret Service.

Unfortunately, even if it was an inside job, the Government will likely squash all evidence of that to make it seem like it was strictly a lone-wolf operation. Only time will tell if the Government will be honest with us over what really happened.

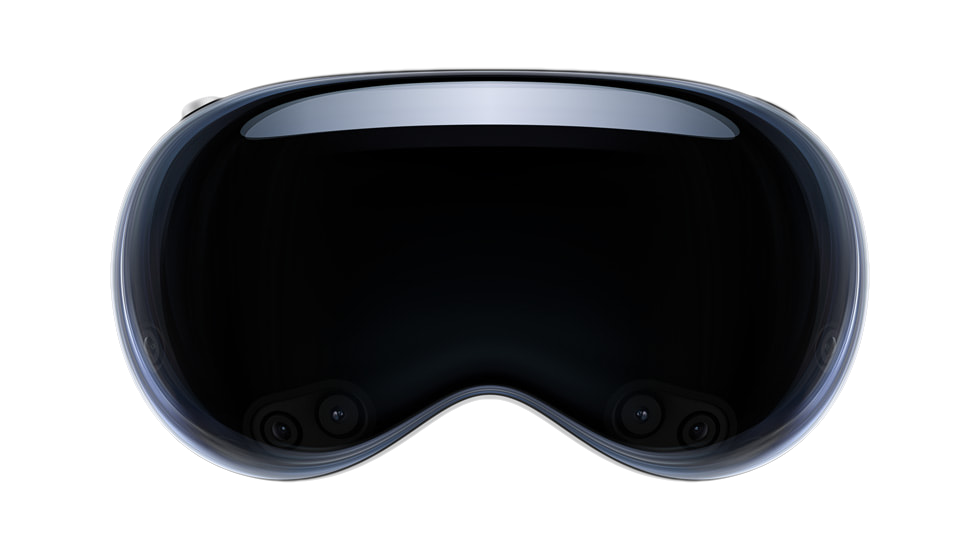

Is Apple’s Vision Pro worth the money?

Let me preface this article by saying that this is not intended to review the Apple Vision Pro. Instead, it is intended as an analysis of Apple’s technology and the design behind the Apple Vision Pro headset. The Vision Pro’s hefty price tag also begins at $3500 and goes up from there depending on selected features. Let’s explore.

Price Tag vs Danger Target

The first elephant in the room to address with this Virtual Reality (VR) headset is its price tag. Because there is presently only one model of this headset, anyone who sees you wearing it knows the value of this headset instantly. This means that if you’re seen out and about in public wearing one, you’ve made yourself a target not simply for theft, but for a possible outright mugging. Thieves are emboldened when they know you’re wearing a $3500 device on your person. Because the Vision Pro is a relatively portable device, it would be easy to scoop up the entire device and all of its accessories in just a few seconds and walk off with it.

Like wearing an expensive diamond necklace or a Rolex watch, these items flaunt wealth. Likewise, so does the Vision Pro. It says that you have disposable income and wouldn’t really mind the loss of your $3500 device. While that previous statement might not be exactly true, it does have grains of truth in it. If you’re so wealthy that you can plop down $3500 for a Vision Pro, you can likely afford to buy another one should it go missing.

However, if you’re considering investing in a Vision Pro VR headset, you’d do well to also invest in a quality insurance policy that covers both loss from theft and damage both intentional and accidental. Unfortunately, a loss policy won’t cover any injuries you might sustain from a mugging. Be careful and remain alert when wearing a Vision Pro in public spaces.

The better choice is not to wear the headset in public spaces at all. Don’t use it on trains, in planes, at Starbucks, sitting in the lobby of airports or even in hotel lobbies. For maximum safety, use the Vision Pro device in the privacy and safety of your hotel room OR in the privacy and safety of your own home. Should you don this headset on public transportation to and from work, expect not only to get looks from people around you, but also to attract thieves looking to take it from you, potentially forcibly. With that safety tip out of the way, let’s dive into the design of this VR headset.

What exactly is a VR headset useful for?

While Apple is attempting to redefine what a VR headset is, they’re not really doing a very good job at it, especially for the Vision Pro’s costly price tag. To answer the question that heads up this section, the answer is very simple.

A VR headset is simply a strap-on 3D display. That’s it. That’s what it is. That’s how it works. Keep reading much further down for the best use cases of 3D stereoscopic displays. The resolution of the display, the eye tracking, the face tracking, the augmented reality features, these are all bells and whistles that roll out alongside the headset and somewhat drive the price tag. The reality is as stated, a VR headset is simply a strap-on video display, like your TV or a computer monitor. The only difference from a TV screen or monitor is that a VR headset offers 3D stereoscopic visuals. Because of the way the lenses are designed on a VR headset, the headset can use its separate display for each eye to project flat screens that appear to float convincingly both at a distance and at a scale that appears realistically large, some even immensely large like an IMAX screen in scale.

These VR flat screens float in your vision like the floating displays featured in many futuristic movies. However, a VR headset is likewise a personal, private experience. Only the wearer can partake in the visuals in the display. Everyone else around you has no idea what you’re seeing, doing or experiencing… except they will know when you’re using the Vision Pro because of one glaring design flaw involving the audio system (more on this below). Let’s simply keep in mind that all that a VR headset boils down to is a set of goggles containing two built-in displays, one for each eye; displays which produce a stereoscopic image. Think of any VR headset as the technological equivalent of a View Master, that old 1970s toy with paper image discs (reels) and a pull down lever to switch images.

How the video information is fed to those displays is entirely up to each VR headset device.

Feeding the Vision Pro

For the Vision Pro, this device is really no different than any of a myriad of other VR headsets on the market. Apple wants you to think that theirs is “the best” because Apple’s Vision Pro is “brand new” and simply because it’s brand new, this should convince you that it is somehow different. In reality, the Vision Pro doesn’t really stand out. Oh sure, it utilizes some newer features, such as better eye tracking and easier hand gestures, but that’s interface semantics. We’ll get into the hand gesture problems below. For the Vision Pro’s uses, getting easy access to visual data from the Vision Pro is made as simple as owning an iPad. This ease is to the credit of Apple, but this ease also exists because the iPad already exists allowing that iPad ease to be slipped into and then leveraged and utilized by the Vision Pro.

In reality, the Vision Pro OS might as well be an iPad attached to a strap-on headset. That’s really how the Vision Pro has been designed. The interface on the iPad is already touch capable, so it makes perfect sense to take the iPadOS and extract and expand it into what drives the Vision Pro, except using the aforementioned eye tracking, cameras and pinch gesture.

The reason the Vision Pro is capable of all of this is that Apple has effectively married the technology guts of an iPad into the chassis of the Vision Pro. This means that unlike many VR headsets which are dumb displays with very little processing power internally, the Vision Pro crams a whole iPad computer inside of the Vision Pro headset chassis.

That design choice is both good and bad. Let’s start with the good. Because the display is driven by an M2 chip main board, similar in design to an iPhone or iPad, it has more than enough power to do what’s needed to drive the Vision Pro with a fast refresh rate and with a responsive interface. This means a decent, friendly, familiar and easy to use interface. If you’re familiar with how to use an iPad or an iPhone, then you can drop right into the Vision Pro with little to no learning curve. This is what Apple is banking on, literally. Because it’s so similar to Apple’s already existing devices, it’s simple to strap one on and be up and running in just a few minutes.

Let’s move on to the bad. Because the processor system is built directly into the headset, that means it will become obsolete the year following its release. As soon as Apple releases the successor to its M2 chip, the Vision Pro will be obsolete. This is a big problem. Expecting people to drop $3500 every 12 months is insane. It’s bad enough with an iPhone that costs $800, but for a device that costs $3500? Yeah, that’s a big no go.

iPhone and Vision Pro

The obvious design choice for the Vision Pro is to marry these two devices together. What I mean by this marriage is that you’re already carrying around a CPU device capable of driving the Vision Pro headset in the palm of your hand. Apple should have designed their VR headset to be a thin client display device. What this means is that as a thin client, the device’s internal processor doesn’t need to be super fast. It simply needs to be fast enough to drive the display at a speed consistent with the refresh rates needed to be a remote display. In other words, turn the Vision Pro into a mostly dumb remote display device, not unlike a computer monitor, except using a much better wireless protocol. Then, allow all Apple devices to pair with and use the Vision Pro’s headset as a remote display.

This means that instead of carrying around two (or rather three, when you count that battery pack) hefty devices, the Vision Pro can be made much lighter and will run less hot. It also means that the iPhone will be the CPU device that does the hard lifting for the Vision Pro. You’re already carrying around a mobile phone anyway. It might as well be the driving force behind the Vision Pro. Simply connect it and go.

Removing all of that motherboard hardware (save a bit of processor power to drive the display) from inside the Vision Pro does several things at once. It removes the planned obsolescence issue around the Vision Pro and turns the headset into a display device that could last 10 years vs a planned obsolescence device that must be replaced every 12-24 months. Instead of replacing the headset each year, we simply continue replacing our iPhones as we always have. This business model fits right into Apple’s style.

A CPU inside of the headset will still need to be fast enough to read and understand the cameras built into the Vision Pro so that eye tracking and all of the rest of these technologies work well. However, it doesn’t need to include a full fledged computer. Instead, connect up the iPhone, iPad or even MacBook for the heavy CPU lifting.

Vision Pro Battery Pack

The second flaw of the Vision Pro is its hefty and heavy battery pack. The flaw isn’t the battery pack itself. It’s the fact that the battery pack should have been used to house the CPU and motherboard, instead of inside the Vision Pro headset. If the CPU main board lived in the battery pack case, it would be a simple matter to replace the battery pack with an updated main board each year, not needing to replace the headset itself. This would allow updating the M2 chip regularly with something faster to drive the headset.

The display technology used inside the Vision Pro isn’t something that’s likely to change very often. However, the main board and CPU will need to be changed and updated frequently to increase the snap and performance of the headset, year over year. Not taking advantage of the external battery pack case to house the main board along with the battery, which must be carried around anyway, is a huge design flaw for the Vision Pro.

Perhaps they’ll consider this change with the Vision Pro 2. Better, make a new iPhone that serves to drive both the iPhone itself and the Vision Pro headset with the iPhone’s battery and using the CPU built into the iPhone to drive the Vision Pro device. By marrying the iPhone and the Vision Pro together, you get the best of both worlds and Apple gets two purchases at the same time… an iPhone purchase and a Vision Pro purchase. Even an iPad should be well capable of driving a Vision Pro device, including supplying power to it. Apple will simply need to rethink the battery sizes.

Why carry around that clunky battery thing when you’re already carrying around an iPhone that has enough battery power and enough computing power to drive the Vision Pro?

Clunky Headset

All VR headsets are clunky and heavy and sometimes hot to wear. The worst VR headset I’ve worn is, hands down, the PSVR headset. The long clunky cables in combination with absolutely zero ventilation and its heavy weight make for an incredibly uncomfortable experience. Even Apple’s Vision Pro suffers from a lot of weight hanging from your cheeks. To offset that, Apple does supply an over-the-head strap that helps distribute the weight a little better. Even still, VR headset wearing fatigue is a real thing. How long do you want to wear a heavy thing resting on your cheekbones and nose that ultimately digs in and leaves red marks? Even the best padding won’t solve this fundamental wearability problem.

The Vision Pro is no different in this regard. The Vision Pro might be lighter than the PSVR, but that doesn’t make it light enough not to be a problem. But, this problem cuts Apple way deeper than this.

Closing Yourself Off

The fundamental problem with any VR headset is the closed-in nature of it. When you don a VR headset, you’re closing yourself off from the world around you. The Vision Pro has opted to include the questionable choice of an aimed spatial audio system. Small slits in the side of the headset aim audio into the wearer’s ears. The trouble is, this audio can be heard by others around you, even if faintly. Meaning, this audio bleed could become a problem in public environments, such as on a plane. If you’re watching a particularly loud movie, those around you might be disturbed by the Vision Pro’s audio bleed. To combat this audio bleed problem, you’ll need to buy some AirPods Pro earbuds and use these instead.

The problem is, how many people will actually do this? Not many. The primary design flaw was in offering up an aimed, but noisy audio experience by default instead of including a pair of AirPods Pro earbuds as the default audio experience when using the Vision Pro. How dumb did the designers have to be to not see the problem coming? More than likely, some airline operators might choose to restrict the use of the Vision Pro entirely on commercial flights simply to avoid the passenger conflicts that might ensue because the passenger doesn’t have any AirPods to use with it. It’s easier to tell passengers that the device cannot be used at all instead of trying to fight with the passenger about putting in AirPods that they might or might not have.

It goes deeper than this, though. Once you don a headset, you’ve closed yourself off. Apple has attempted to combat the closed-off nature of a VR headset by offering up front facing cameras and detecting when to allow someone to barge into the VR world and have a discussion with the wearer. This is an okay idea so long as enough people understand that this barge-through idea exists. That will take some getting used to, both for the Vision Pro wearer and for the person trying to get the wearer’s attention. That assumes that barge-through even works well enough to do that. I suspect that the wearer will simply need to remove the headset to have a conversation and then put it back on to resume whatever they were previously doing.

Better Design Choice

Instead of a clunky closed off VR headset, Apple should have focused on a system like the Google Glass product. Google has since discontinued the production of Google Glass, mostly because it really didn’t work out well, but that’s more because of Google itself and not of the idea behind the product.

Yes, a wearable display system could be very handy, particularly with a floating display in front of the vision of the user. However, the system needs to work in a much more open way, like Google Glass. Because glasses are an obvious solution to this, having a floating display in front of the user hooked up to a pair of glasses makes the most obvious sense. Glasses are light and easy to use. They can be easily put on and taken off. Glasses are easy to store and even easier to carry. Thick, heavy VR headsets are none of these things.

Wearing glasses keeps the person aware of their surroundings, allowing for talking to and seeing someone right in front of you. The Vision Pro, while it can recreate the environment around you with various cameras, still closes off the user from the rest of the world. Only Apple’s barge-through system, depending on its reliability, has a chance to sort-of mitigate this closed off nature. However, it’s pretty much guaranteed that the barge-through system won’t work as well as wearing a technology like Google Glass.

For this reason, Apple should have focused on creating a floating display in front of the user that was attached to a pair of glasses, not to a bulky and clunky headset. Yes, the Vision Pro headset is quite clunky.

Front Facing Cameras

You might be asking, if Google Glass was such a great alternative to a bulky headset, why did Google discontinue it? Simple: privacy concerns over the front facing camera, which led to a backlash. Because Google Glass shipped with a front facing camera enabled, anyone wearing it, particularly when entering a restaurant or bar, could end up recording the patrons in that establishment. Because restaurants and bars are privately owned spaces, their owners can insist that patron privacy be respected. To that end, owners of restaurants and bars ultimately barred anyone wearing Google Glass devices from using them in the establishment space.

Why is this important to mention? Because Apple’s Vision Pro may suffer the same fate. Because the Vision Pro also has front facing cameras, cameras that support the barge-through feature among other potential privacy busting uses, restaurants and bars again face the real possibility of another Google Glass like product interfering with the privacy of their patrons.

I’d expect Apple to fare no better in bar and restaurant situations than Google Glass. In fact, I’d expect those same restaurants and bars that banned Google Glass wearers from using those devices to likewise ban any users who don a Vision Pro in their restaurants or bars.

The Vision Pro is so new that restaurant and bar owners may not be exactly sure how it works. If you’re a restaurant or bar owner, know that the Vision Pro has front facing cameras that record input all of the time, just like Google Glass. If you’ve previously banned Google Glass use, you’ll probably want to ban the use of Vision Pro headsets in your establishment for the same reasons. Because you can’t know whether a Vision Pro user has enabled a Persona, it’s safer to simply ban all Vision Pro usage than to try to determine if the user has set up a Persona.

Why does having a Persona matter? Once a Persona is created, this is when the front facing cameras run almost all of the time. If a Persona has not been created, the headset may or may not run the front facing cameras. Once a Persona is created, the front facing OLED display creates a 3D virtual representation of the person’s eyes using the 3D Persona (aka avatar). What you’re seeing in the image of the eyes is effectively a live CGI created image.

The Vision Pro is claimed by Apple not to run the front cameras without a Persona created, but bugs, updates and whatnot may change the reality of that statement from Apple. Worse, though, is that there’s no easy way to determine if the user has created a Persona. That’s also not really a restaurant staff or flight attendant job. If you’re a restaurant or bar owner or even a flight attendant, you must assume that all users have created a Persona and that the front facing cameras are indeed active and recording. There’s no other stance to take on this. If even one user has created a Persona, then the assumption must be that the front facing cameras are active and running on all Vision Pro headsets. Thus, it is wise to ban the use of Apple’s Vision Pro headsets in and around restaurant and bar areas and even on airline flights… lest they be used to surreptitiously record others.

Here’s another design flaw that Apple should have seen coming. It only takes about 5 minutes to read and research Google Glass’s Wikipedia page and its flaws… and why it’s no longer being sold. If Apple’s engineers had done this research during the design phase of the Vision Pro, they might have decided not to include front facing cameras on the Vision Pro. Even when the cameras are supposedly locked down and unavailable, that doesn’t preclude Apple from using these cameras for its own surveillance purposes while someone is out and about. Restaurant owners, beware. All of Apple’s assurances mean nothing if a video clip of somebody in your establishment, recorded via the Vision Pro’s front cameras, surfaces on a social media site.

Better Ideas?

Google Glass represents a better technological and practical design solution: a design that maintains an open visual field so that the user is not closed off and can see and interact with the world around them. However, because Google Glass also included a heads up display in the user’s vision, some legislators took offense at the possibility that a user could become distracted by the heads up display while operating a motor vehicle and drive dangerously as a result. There shouldn’t be a danger of this situation when using a Vision Pro, or at least one would hope not. Still, because the Vision Pro is capable of creating a live 3D image representation of what’s presently surrounding the user, inevitably someone will attempt to drive a car while wearing a Vision Pro and all of these legislative arguments will resurface… in among various lawsuits should something happen while wearing it.

Circling Back Around

Let’s circle around to the original question asked by this article. Is the Vision Pro worth the money?

Considering its price tag and its comparative functional sameness to an iPad and to other similar but less expensive VR headsets, not really. Right now, the Vision Pro doesn’t sport a “killer app” that makes anyone need to run out and buy one. If you’re looking for a device with a 3D stereoscopic display that acts like an iPad and that plays nice in the Apple universe, this might suffice… assuming you can swallow the hefty sticker shock that goes with it.

However, Apple more or less over-engineered the product by adding the barge-through feature, which requires the front facing camera(s) and the mostly decorative front facing lenticular 3D display, solely to support this one “outside friendly” feature. Yes, the front facing OLED lenticular display is similar to the Nintendo 3DS’s 3D lenticular display. The lenticular feature means that you probably need to stand in a very specific position for the front facing display to actually work correctly and to display 3D in full, otherwise it will simply look weird. The front facing display is more or less an expensive addition that is useless to the wearer. It’s simply there as a convenience to anyone who might walk by. In reality, this front display is a waste of money and design dollars. Even then, this display remains of almost no use until the user has set up their Persona.

Once the wearer has set up a Persona, the unit will display computer generated 3D eyes on the display at times, similar to the image above. When the eyes actually do appear, they appear to be placed at the correct distance on the face using a 3D lenticular display to make it appear like the real 3D eyes of the user. The 3D lenticular display doesn’t require glasses to appear 3D because of the lenticular technology. However, the virtual Persona created is fairly static and falls rather heavily into the uncanny valley. It’s just realistic enough to elicit interest, but just unrealistic enough to feel creepy and weird. Yes, even the eyes. This is something that Apple usually nails. However, this time it seems Apple got the Persona system wrong… oh so wrong. If Apple had settled on a more or less cartoon-like figure with exaggerated features, the Persona system might have worked better, particularly if it used anime eyes or something fun like that. When it attempts to mimic the real eyes of the user, it simply turns out creepy.

In reality, the front facing display is a costly lenticular OLED add-on that offers almost no direct benefit to the Vision Pro user. However, the internal display system within the Vision Pro sports around 23 million pixels across both eyes, or around 11.5 million pixels per eye. That is less than a 5K display per eye (about 14.7 million pixels), but more than a 4K display per eye (about 8.3 million pixels). When combined with both eyes, the full resolution allows for the creation of a 4K floating display. However, the Vision Pro would not be able to create an 8K floating display due to its lack of pixel density. The Vision Pro wouldn’t even be able to create a 5K display for this same density reason.
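To make the pixel comparison concrete, here is a minimal Python sketch of the arithmetic. It assumes the standard 16:9 pixel dimensions for 4K, 5K and 8K displays and uses the article’s approximate figure of 23 million pixels across both eyes. The function and constant names are made up for illustration. It only compares raw pixel counts and ignores optics and field of view, which also affect how sharp a floating display actually looks.

```python
# Standard 16:9 pixel dimensions for common display classes.
RESOLUTIONS = {
    "4K": (3840, 2160),
    "5K": (5120, 2880),
    "8K": (7680, 4320),
}

# Approximate figure quoted in the article: ~23 million pixels across both eyes.
VISION_PRO_TOTAL_PIXELS = 23_000_000
VISION_PRO_PER_EYE = VISION_PRO_TOTAL_PIXELS // 2  # ~11.5 million per eye


def pixel_count(width, height):
    """Total number of pixels for a display of the given dimensions."""
    return width * height


def compare_to_per_eye(per_eye=VISION_PRO_PER_EYE):
    """Map each display class to (pixels, True if per_eye covers that many pixels)."""
    result = {}
    for name, (width, height) in RESOLUTIONS.items():
        pixels = pixel_count(width, height)
        result[name] = (pixels, per_eye >= pixels)
    return result


if __name__ == "__main__":
    print(f"Vision Pro per eye (approx.): {VISION_PRO_PER_EYE:,} pixels")
    for name, (pixels, covered) in compare_to_per_eye().items():
        verdict = "within" if covered else "beyond"
        print(f"{name}: {pixels:,} pixels ({verdict} the per-eye pixel budget)")
```

On raw counts alone, only the 4K class fits within the per-eye budget, which matches the comparison above.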

Because many 5K flat and curved LCD displays are now priced under $800 and are likely to drop in price even further, you can buy two 5K displays for less than half the cost of one Vision Pro headset. Keep in mind that these are 5K monitors. They’re not 3D and they’re relatively big in size. They don’t offer floating 3D displays appearing in your vision and there are limits to a flat or curved screen. However, if you’re looking for sheer screen real estate for your computing work, buying two 5K displays would offer far more of it than the Vision Pro. Having two monitors in front of you is also easier to navigate than being required to look up, down, left and right and perhaps crane your neck to see all of the real estate that the Vision Pro affords… in addition to getting the hang of pinch controls.

The physical monitor comparison, though, is in many ways like comparing apples to oranges. However, this comparison is simply to show you what you can buy for less money. With $3400 you can buy a full computer rig including a mouse, keyboard, headphones and likely both of those 5K monitors for less than the cost of a single Vision Pro headset. You might even be able to throw in a gaming chair. Keeping these buying options in perspective keeps you informed.
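The price comparison can be checked with a few lines of Python. This is a rough sketch using only the figures quoted in this article: $800 as the upper bound for one 5K monitor and $3500 for the headset. Actual street prices will vary, and the names below are made up for illustration.

```python
# Figures quoted in the article; real prices vary by model and retailer.
MONITOR_5K_PRICE = 800    # upper bound for one 5K monitor, in dollars
VISION_PRO_PRICE = 3500   # headset price used in this article, in dollars


def monitors_cost(count, unit_price=MONITOR_5K_PRICE):
    """Total cost of buying `count` monitors at `unit_price` each."""
    return count * unit_price


two_monitors = monitors_cost(2)
half_headset = VISION_PRO_PRICE / 2
left_for_rig = VISION_PRO_PRICE - two_monitors

if __name__ == "__main__":
    print(f"Two 5K monitors: ${two_monitors}")
    print(f"Half a Vision Pro: ${half_headset:.0f}")
    print(f"Left over for the rest of a rig: ${left_for_rig}")
```

With these figures, two monitors come in under half the headset’s price, leaving the remainder of the budget for the computer, keyboard, mouse and headphones.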

The Bad

Because the headset offers a closed and private environment that only the wearer can see, it opens the door to bad situations when used in a place of business or even out in public. For example, if an office manager were to buy their employee a Vision Pro instead of a couple of new and big monitors, there’s no way to know what that worker might be doing with those floating displays, simply because the Vision Pro is a closed, private environment. They could be watching porn at the same time as doing work in another window. This is the danger of not being able to see and monitor your staff’s computers, if even by simply walking by. Apple, however, may have added a business friendly drop-in feature to allow managers to monitor what employees are seeing and doing in their headsets.

You can bet that should a VR headset become a replacement for monitors in the workplace, many staff will use the technology to surf the web to inappropriate sites up to and including watching porn. This won’t go over well for either productivity of the employee or the manager who must manage that employee. If an employee approaches you asking for a Vision Pro to perform work, be cautious when considering spending $3500 for this device. There may be some applicable uses for the Vision Pro headset in certain work environments, but it’s also worth remaining cautious for the above reasons when considering such a purchase for any employee.

On the flip side, for personal use, buy whatever tickles your fancy. If you feel justified in spending $3500 or more for an Apple VR headset, go for it. Just know that you’re effectively buying a headset based monitor system.

Keyboard, Eye Tracking and The Pinch